Enterprise AI Agent Development: How Custom AI Agents Integrate with Your Existing Systems

In Hong Kong, more and more business executives are raising AI agent development in procurement discussions, yet decision-makers who truly understand how it differs from chatbots and RPA remain in the minority. This gap in understanding often leads enterprises to either underestimate the actual capabilities of AI Agents, or to discover after project initiation that their existing infrastructure cannot support deployment.

An AI Agent is not a smarter question-and-answer tool. It is a business automation execution layer capable of autonomously planning, calling tools, and executing multi-step tasks. Its value lies not in "conversation" but in "getting work done": processing complex business workflows across systems without requiring step-by-step human intervention, forming a complete closed loop from receiving instructions to delivering output.

AI Agents, RPA, and Traditional Automation: The Fundamental Differences

For many enterprises, the first obstacle when evaluating AI Agents is conceptual confusion. RPA (Robotic Process Automation) executes pre-defined fixed operation paths; the moment it encounters a page change or process exception, the automation breaks down. Traditional chatbots can only respond within pre-set conversation trees and cannot actively execute back-end operations.

The fundamental difference with AI Agents lies in autonomy and adaptability. An AI Agent can independently break down steps according to task objectives, assess the current state, choose which tools to call, and dynamically adjust its execution path when exceptions arise, rather than relying on humans to reset the rules. For enterprise scenarios with high process complexity and frequently changing business rules, this difference in capability produces an efficiency gap of an entirely different magnitude.

It is worth noting that AI Agents are not suited to every automation scenario.
For operations with highly fixed rules and rarely changing processes, RPA is lower cost and simpler to maintain. Accurately defining the use case before entering project initiation is the first step in avoiding resource misallocation.

What Does a Deployable AI Agent Tech Stack Require?

From an engineering perspective, the technical architecture for enterprise-grade AI agent development is divided into three layers. Without any one of them, an Agent cannot operate reliably in a production environment.

The model layer determines the upper limit of an Agent's reasoning capability. Different tasks place different demands on models: complex multi-step reasoning and document analysis suit GPT-5; cost-sensitive, high-concurrency structured processing scenarios are better served by DeepSeek-V3; workflows involving image generation require an image model such as Stable Diffusion. Relying on a single model to cover all scenarios both wastes cost and underperforms on specific tasks. A multi-model hybrid architecture is the practical choice for enterprise deployment.

The workflow engine layer is the true dividing line between "being able to do AI" and "being able to deliver enterprise AI." It is responsible for task decomposition logic, step sequencing, tool-calling mechanisms, exception branch handling, and the design of human intervention nodes, meaning the conditions under which an Agent should pause and await human confirmation rather than continue executing autonomously. Vendors without a mature workflow engine typically deliver a system that runs smoothly in a demo environment but proves fragile in production.

The system connectivity layer determines whether an Agent can truly integrate into an enterprise's existing operations. An Agent must be able to read and write ERP data, update CRM records, query financial systems, and trigger approval workflows via API.
The depth of integration at this layer directly determines the actual business value an Agent creates for the enterprise.

Five High-Value Deployment Scenarios for Enterprise AI Agents

No matter how clear the concept, concrete scenarios are always more compelling. The following five areas represent the AI Agent application types with the strongest procurement intent among Hong Kong enterprises in 2025 to 2026:

1. Financial compliance review automation: An Agent automatically reads the latest regulatory documents, cross-references internal institutional policies, flags discrepancies, and generates structured reports, replacing manual page-by-page review and directly addressing the compliance pressures faced by SFC- and HKMA-regulated institutions.
2. Procurement approval workflows: Automatically verifies whether procurement requests comply with budget rules and supplier qualifications, routes requests according to approval tiers, and maintains a full auditable record throughout.
3. Healthcare resource scheduling optimisation: Under multiple constraint conditions (staff qualifications, patient priority, equipment availability), an Agent generates optimal scheduling plans in real time and automatically updates them as conditions change.
4. Customer service triage and handling: Automatically determines query type, directly handles standard requests, routes complex or high-risk cases to human agents, and simultaneously updates CRM records to reduce repetitive manual operations.
5. Cross-system data consistency maintenance: When ERP, CRM, and financial systems hold inconsistent data for the same client, an Agent automatically identifies discrepancies, triggers a verification process, and records the outcome, replacing manual periodic reconciliation.

Integrating Legacy Systems: The Technical Obstacle Enterprises Most Commonly Underestimate

In practice, the complexity of integrating existing systems often consumes more engineering resources than developing the Agent itself.

An API-first approach is the most robust integration strategy. Leading ERP systems such as SAP and Oracle both provide standard API interfaces, making most integrations technically feasible. The real challenge lies in the completeness of interface documentation and version stability. During the integration planning phase, a detailed feasibility assessment of each target system's API capability is required, rather than assuming that "having an API means it can connect."

For legacy systems without modern APIs, connectivity can be addressed through direct database connections, RPA bridge layers, or middleware adapters. However, these solutions carry higher maintenance costs and must be factored into long-term architectural decisions.

Additionally, any enterprise Agent deployment must incorporate adequate sandbox testing environments and rollback mechanisms. When an Agent encounters an anomaly in production, the system should automatically degrade to human-handled processing mode rather than allowing erroneous operations to propagate through core business systems.

If your team is still evaluating the technical direction, from "whether to introduce AI workflow automation" to "how to choose the right custom development approach", the article "Enterprise Generative AI Solutions: From General-Purpose Tools to Deeply Customized Workflows" maps out the applicable boundaries between custom workflows and general-purpose tools from a business requirements perspective, and can serve as a reference framework before formulating your AI deployment strategy.

Frequently Asked Questions

Q: How long does AI Agent deployment take?
For a well-scoped single-scenario Agent, the time from requirements confirmation to production deployment is typically four to eight weeks.
For multi-agent collaborative systems or projects involving complex legacy system integration, a phased delivery approach is recommended: complete the MVP for the core scenario first, then progressively expand the collaborative scope, to reduce overall project risk.

Q: Can an AI Agent be deployed entirely on a company's private servers without connecting to the public cloud?
Yes. For institutions regulated by the SFC or HKMA, or subject to the PDPO, where data cannot leave the jurisdiction, private on-premise deployment is the standard approach. Open-weight models such as DeepSeek-V3 can be hosted entirely on private infrastructure; proprietary models such as GPT-5 are offered as hosted services, so whether they fit a strict data-residency requirement has to be confirmed case by case. Either route requires a development vendor with the appropriate infrastructure configuration experience to execute correctly.

Q: How do you prevent an AI Agent from performing erroneous operations in a production environment?
The core mechanisms operate on three levels: setting human review nodes during workflow design, with mandatory human confirmation required for high-risk operations such as financial transfers or contract generation; designing operation logs and rollback capability at the system architecture level; and simulating various exception scenarios during testing to ensure the Agent correctly degrades rather than erroneously executes under boundary conditions.

Q: How do AI Agents perform in Hong Kong enterprises' mixed English and Traditional Chinese environments?
Modern LLMs have reached a high level of support for Traditional Chinese. However, for scenarios involving Hong Kong-specific regulatory terminology, industry abbreviations, or written Cantonese conventions, targeted optimisation at the prompt engineering and model fine-tuning level is still required, rather than relying on the default behaviour of a general-purpose model.

GTS provides enterprise-grade AI agent development and custom development services for large enterprises in Hong Kong and the Greater Bay Area.
By integrating GPT-5, DeepSeek-V3, Stable Diffusion, and other leading models, combined with a proprietary Agent and workflow engine, GTS covers full-cycle deployment requirements for regulated industries including financial services, healthcare, and industrial IoT. All projects support private on-premise deployment, source code is transferred in full to the client, and GTS has direct delivery experience under Hong Kong's SFC, HKMA, and PDPO compliance frameworks. To learn how an enterprise workflow automation solution can be deployed in your specific business scenario, contact a GTS technical consultant to arrange an initial discussion.

If you already have a clear business pain point but are uncertain whether an AI Agent is the most appropriate solution, feel free to describe the scenario directly to us. GTS provides a no-pressure initial feasibility assessment to help you clarify the technical boundaries and realistic engineering scope expectations before project initiation.

This article, "Enterprise AI Agent Development: How Custom AI Agents Integrate with Your Existing Systems", was compiled and published by GTS Enterprise Systems and Software Development Service Provider. For reprint permission, please indicate the source and link: https://www.globaltechlimited.com/news/post-id-51/
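
As an illustration of the human review nodes and degrade-to-human behaviour described in the FAQ of the article above, the following is a minimal Python sketch. All names here (`ActionGate`, `AgentAction`, `HIGH_RISK_OPERATIONS`, `demo_executor`) are hypothetical and invented for this example; it is not GTS's workflow engine, and a real deployment would also need persistence, rollback, and authentication.

```python
from dataclasses import dataclass, field
from typing import Callable

# Hypothetical operation names. The article's examples of high-risk
# operations are financial transfers and contract generation.
HIGH_RISK_OPERATIONS = {"financial_transfer", "contract_generation"}


@dataclass
class AgentAction:
    operation: str
    payload: dict


@dataclass
class ActionGate:
    """Routes each proposed agent action to execution or to a human queue."""
    execute: Callable[[AgentAction], None]
    review_queue: list = field(default_factory=list)
    audit_log: list = field(default_factory=list)

    def submit(self, action: AgentAction) -> str:
        if action.operation in HIGH_RISK_OPERATIONS:
            # Human review node: never execute autonomously.
            self.review_queue.append(action)
            outcome = "pending_human_review"
        else:
            try:
                self.execute(action)
                outcome = "executed"
            except Exception as exc:
                # Degrade to human handling instead of retrying blindly.
                self.review_queue.append(action)
                self.audit_log.append((action.operation, f"error: {exc}"))
                outcome = "degraded_to_human"
        # Every decision is logged so it can be audited later.
        self.audit_log.append((action.operation, outcome))
        return outcome


def demo_executor(action: AgentAction) -> None:
    """Stand-in for a real ERP/CRM call; fails on request for the demo."""
    if action.payload.get("simulate_failure"):
        raise RuntimeError("downstream system unavailable")


gate = ActionGate(execute=demo_executor)
results = [
    gate.submit(AgentAction("update_crm_record", {"customer_id": 42})),
    gate.submit(AgentAction("financial_transfer", {"amount": 1000})),
    gate.submit(AgentAction("update_crm_record", {"simulate_failure": True})),
]
print(results)
```

The gate keeps the decision ("may this run without a person?") separate from the executor, so the same review rules can wrap any downstream system call.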

A Complete Guide to Trading System Development: Order Management, Clearing & Settlement, and Low-Latency Architecture

For technology decision-makers at Hong Kong financial institutions, trading system development has never been a purely engineering concern. Whether a system can handle concurrent multi-asset trading, meet regulatory technical requirements, and remain stable as business scales: the answers to these questions directly determine an institution's competitive position in the market. This article works through the core issues that enterprises must clarify when building a financial trading system, from order management architecture and clearing and settlement design, to engineering choices for low-latency systems.

1. How Regulatory Upgrades Are Redefining the Baseline for System Development

Before examining architectural details, it is worth first understanding Hong Kong's current regulatory context.

The Securities and Futures Commission (SFC) authorises Automated Trading Services (ATS) under Part III of the Securities and Futures Ordinance (SFO), and requires relevant institutions to meet defined standards in areas including system capacity planning, stress testing, and abnormal trading surveillance, all of which are addressed in the SFC's Guidelines for Regulation of Automated Trading Services. In parallel, the Hong Kong Monetary Authority (HKMA) Supervisory Policy Manual module TM-G-1 (General Principles for Technology Risk Management) sets clear expectations for financial institutions regarding system development lifecycle management, change control, and disaster recovery planning.

The practical implication of these requirements is this: compliance capability must be built into the architecture from the outset, not retrofitted later. Institutions that overlook the technical compliance foundation at the early stages of system development frequently encounter far greater costs when they reach the licensing application or regulatory review stage.

2. Order Management System: The Central Nervous System of the Trading Chain

When planning financial trading infrastructure, many institutions underestimate the pivotal role of the Order Management System (OMS) within the overall architecture. An OMS is not simply an "order recording tool"; it is the core coordination layer connecting the quoting engine, pre-trade risk validation, matching engine, and clearing and settlement.

A well-designed OMS must be capable of handling several categories of critical business logic:

Order Routing and Execution Strategy: When operating across different markets (Hong Kong equities, US equities, derivatives, and virtual assets), the routing rules, partial fill handling logic, and market connectivity protocols each differ. The OMS must support flexible multi-asset, multi-market configuration through a unified interface, rather than relying on multiple isolated systems maintained in parallel.

Pre-Trade Risk Embedding: Effective risk control does not intervene after matching; it validates orders before they enter the matching engine. The OMS must have built-in position limits, capital adequacy checks, and abnormal order interception mechanisms, ensuring every order is already compliant with the institution's defined risk parameters before it reaches the market.

Audit Trail Completeness: From order creation, amendment, and rejection through to final execution, the OMS should record timestamps at every status node.
This supports regulatory compliance requirements for transaction traceability and provides a reliable data foundation for internal audit purposes.

For a deeper look at how the matching engine and risk modules are co-designed within an enterprise architecture, readers may wish to refer to our earlier article on securities trading system customisation, “Custom Securities Trading System Development: From Matching Engine to Risk and Clearing Integration”, which is well suited to technical leads currently conducting system architecture evaluations.

3. Clearing and Settlement System: Key Design Considerations for Broker-Side Intermediate Layers

Clearing and settlement is frequently the most underestimated component in financial trading system development. Many institutions assume that connecting to CCASS or OTC Clear completes their post-trade processing requirements. In practice, a broker-level clearing intermediate layer is a distinct and necessary engineering project in its own right.

In Hong Kong's market environment, an institution's self-built clearing layer typically needs to cover the following functional modules:

DvP (Delivery versus Payment) Logic: This ensures securities and funds are exchanged simultaneously, eliminating the settlement risk that arises from one-sided failures. Within the T+2 settlement cycle, the system must track the status of every pending settlement in real time, and trigger predefined exception-handling flows upon settlement failure.

Margin Calculation Engine: For businesses involving derivatives or leveraged trading, the system must calculate each account's margin level in real time and automatically initiate margin call notifications or forced liquidation procedures when threshold levels are reached.
The accuracy and real-time performance of this component directly affect the institution's ability to manage credit risk exposure.

Regulatory Reporting Interface: The clearing system must provide standardised data output interfaces to support periodic filings and real-time reporting requirements submitted to HKEX, the SFC, or the HKMA, removing any reliance on the fragile practice of manually exporting compliance reports.

4. Low-Latency Trading System: The Architecture Decisions That Define the Boundaries of Competitive Advantage

Low latency is not a universal requirement, but for institutions engaged in algorithmic trading, quantitative strategy execution, or cross-market arbitrage, differences at the microsecond level translate directly into strategy profitability. When planning a low-latency trading system architecture, the following engineering decisions are among the most consequential:

Co-location Strategy: Deploying the core execution nodes of the trading system within the same data centre facility as the exchange is the most direct and effective means of reducing round-trip network latency. Hong Kong Exchanges and Clearing (HKEX) offers co-location services that allow institutions to deploy servers directly alongside HKEX's own infrastructure, enabling round-trip latency to be held within single-digit millisecond ranges.

Event-Driven Architecture vs. Polling: For scenarios such as order status updates and market data consumption, an event-driven architecture can significantly reduce unnecessary CPU utilisation and response latency. By contrast, polling introduces additional timing jitter under high-frequency conditions and is not suited to latency-sensitive trading paths.

Kernel Bypass Technology: In extreme low-latency scenarios, bypassing the operating system kernel for network I/O, through technologies such as DPDK or RDMA, can eliminate tens to hundreds of microseconds of system call overhead.
Implementing such technologies requires deep expertise in the underlying network stack, and non-specialist teams are generally advised not to attempt it independently.

It is also worth noting that "low latency" and "high-frequency trading (HFT)" carry meaningfully different engineering requirements. When planning quantitative trading system infrastructure, institutions should first establish the execution frequency and order flow characteristics of their strategies, then select the appropriate technical approach accordingly, avoiding the trap of applying HFT-level engineering complexity to support mid-to-low frequency strategies that in practice only require millisecond-level responsiveness.

5. AI-Assisted Development: How GTS Shortens the Delivery Cycle for Enterprise Trading Systems

Throughout the construction of all the modules described above, development efficiency and system reliability are equally important dimensions for enterprise decision-makers to weigh. GTS has integrated AI-assisted development capabilities into the delivery process for enterprise-grade trading systems, spanning requirements analysis, automated generation of architecture documentation, code review, and intelligent test case coverage. The introduction of AI tooling allows the development cycle for complex financial systems to be shortened by approximately 30% to 40%, while maintaining the documentation completeness and traceability standards required by Hong Kong's financial regulatory environment.

This AI-efficiency-driven development model is particularly valuable for institutions needing to rapidly deploy new business lines, such as virtual asset trading or cross-border derivatives clearing, enabling enterprises to achieve a more competitive market entry window without compromising system quality.

Is your institution's trading system ready for the next phase of business growth?
Whether you are at the early stage of system evaluation or already have a defined module upgrade plan, GTS's technical advisory team can provide customised consulting and system design tailored to the Hong Kong market. Submit your requirements and we will arrange a dedicated technical discussion within two business days.

Taken as a whole, trading system development is never a matter of assembling isolated modules; it is the organic integration of order management, clearing and settlement, low-latency execution, and compliance architecture. In a financial centre as regulatory-precise and competitively concentrated as Hong Kong, every architectural decision deserves careful scrutiny before implementation.

This article, "A Complete Guide to Trading System Development: Order Management, Clearing & Settlement, and Low-Latency Architecture", was compiled and published by GTS Enterprise Systems and Software Development Service Provider. For reprint permission, please indicate the source and link: https://www.globaltechlimited.com/news/post-id-50/
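
To make the pre-trade risk embedding described in section 2 of the article above more concrete, here is a minimal Python sketch of a check that runs before an order is released to the matching engine. The `Order` and `AccountState` types, the per-symbol `position_limit`, and the cash-only funds check are all simplifying assumptions made for this illustration; real position limits and capital adequacy rules are institution-specific and considerably richer.

```python
from dataclasses import dataclass
from decimal import Decimal


@dataclass(frozen=True)
class Order:
    account: str
    symbol: str
    side: str          # "BUY" or "SELL"
    quantity: int
    price: Decimal


@dataclass
class AccountState:
    cash: Decimal
    positions: dict        # symbol -> signed quantity currently held
    position_limit: int    # hypothetical absolute per-symbol limit


def pre_trade_check(order: Order, state: AccountState) -> list[str]:
    """Return rejection reasons; an empty list means the order may proceed."""
    if order.side not in ("BUY", "SELL"):
        return ["unknown side"]
    if order.quantity <= 0 or order.price <= 0:
        return ["invalid quantity or price"]

    reasons = []
    signed_qty = order.quantity if order.side == "BUY" else -order.quantity
    projected = state.positions.get(order.symbol, 0) + signed_qty
    if abs(projected) > state.position_limit:
        reasons.append("position limit exceeded")
    # Simplified funds check: a buy must be fully covered by available cash.
    if order.side == "BUY" and order.quantity * order.price > state.cash:
        reasons.append("insufficient funds")
    return reasons


state = AccountState(
    cash=Decimal("100000"),
    positions={"0005.HK": 900},
    position_limit=1000,
)
accepted = pre_trade_check(Order("A1", "0005.HK", "BUY", 100, Decimal("60")), state)
over_limit = pre_trade_check(Order("A1", "0005.HK", "BUY", 200, Decimal("60")), state)
no_funds = pre_trade_check(Order("A1", "0005.HK", "BUY", 50, Decimal("5000")), state)
print(accepted, over_limit, no_funds)
```

Returning a list of reasons rather than a single boolean lets the OMS record why an order was intercepted, which also feeds the audit trail.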

Hospital Information System Integration Checklist: 6 Steps for Hong Kong Private Hospitals to Connect LIS, RIS, Pharmacy and EHR

For many IT managers at Hong Kong private hospitals, system integration is a more daunting challenge than the procurement decision itself. Laboratory, radiology, and pharmacy systems operate in silos; clinical staff must switch between multiple interfaces to access information; medication order execution status cannot be synchronised in real time; and patient records appear duplicated or incomplete across systems. These issues not only affect clinical efficiency; following the enactment of the Electronic Health Record Sharing System (Amendment) Ordinance 2025, they now also directly implicate an institution's regulatory compliance obligations.

This article takes a practical approach to outlining 6 key steps for Hospital Information System integration, helping Hong Kong private hospitals complete the connection of LIS, RIS, pharmacy, and electronic health records without disrupting daily operations, while meeting eHealth+ interoperability requirements.

The Root Cause of Integration Failures Is Rarely the Technology Itself

When hospitals begin a Health Information Management System integration project, they tend to frame the problem as a technical challenge. In practice, however, the most common reason projects fail is inadequate upfront preparation. There are three sources of complexity unique to Hong Kong's private healthcare environment, and understanding them is a prerequisite for moving forward successfully:

Subsystems from different eras and different vendors, each with its own data structures and interface standards;
eHealth+ compliance requirements that impose clear obligations around the completeness and timeliness of data submissions;
Limited in-house IT capacity, which frequently causes integration projects to stall during the testing phase.

Step 1: System Inventory (Essential Groundwork Before You Begin)

Before a single line of interface code is written, a thorough inventory of the current system landscape must be completed.
This includes documenting the version numbers, database types, and API documentation completeness of all existing subsystems, as well as determining whether each system's data output conforms to industry-standard protocols such as HL7 v2.x, FHIR, or DICOM, or relies on proprietary formats.

The value of this inventory lies in identifying high-risk interfaces: the connection points where the greatest disparity in data standards exists and where integration problems are most likely to arise. In most cases, the LIS laboratory results return pathway and the pharmacy medication order execution pathway represent the highest-priority integration targets from a clinical safety perspective.

Step 2: Technical Standards and Common Pitfalls Across Six Core Interfaces

When advancing Hospital Information Management System (HIMS) integration in a Hong Kong private hospital, the following six interfaces are the most critical, each with its own characteristic implementation challenges in the local context:

LIS Laboratory Information System: The most common issue is inconsistency in HL7 ORU message format versions, which prevents laboratory results from being automatically returned to the main system.

RIS Radiology Information System: Delays in matching HL7 ORM scheduling instructions with the RIS Worklist frequently cause radiology workflows to fall out of sync with clinical orders.

PACS Picture Archiving and Communication System: When DICOM image viewing permissions are not integrated with the HIS user role framework, imaging silos form and physicians are unable to complete image review within a single interface.

PIS Pharmacy Information System: The failure to synchronise medication order execution status to the main system in real time is a primary source of duplicate dispensing risk and a recurring finding in hospital accreditation reviews.

EHR and eHealth+ Upload Interface: Incomplete data field mapping is the most common cause of compliance failure.
The Hospital Authority's relevant guidelines explicitly require that health data submitted to eHealth+ conform to specified data element standards. Where the HIS does not fully map to these fields at the design stage, the cost of remediation is substantial.

Patient CRM and Appointment System: Inconsistent Patient Master Indexes across systems cause the same patient to appear as multiple separate records, significantly undermining the continuity of medical records and the accuracy of data analytics.

Step 3: Data Migration (Preserving Historical Records Is a Non-Negotiable Responsibility)

Data migration is the phase in which institutions can least afford errors. The recommended approach is incremental rolling migration combined with a dual-write mechanism, rather than a one-time full cutover. During the parallel operation period, data is written simultaneously to both the old and new systems; only once incremental data validation has confirmed stability should writes to the legacy system be terminated.

Pre-migration data cleansing is equally essential: identifying and correcting duplicate records, missing fields, and formatting errors in historical data is far less costly than remediation after migration. Upon completion, a data reconciliation report should be produced, comparing record counts between the source and target systems to ensure completeness is auditable.

Steps 4 and 5: Parallel Testing and Phased Cutover (The Core Strategy for Risk Isolation)

In any system integration project, the commitment to uninterrupted operations must be delivered through architectural design, not left to chance.

The parallel testing phase should involve at least four weeks of end-to-end simulation in an isolated sandbox environment. Test scenarios should cover peak outpatient concurrency, cross-system data synchronisation latency, and end-to-end validation of eHealth+ uploads.
Emergency rollback trigger conditions must be defined in advance to ensure that, in the event of a P0-level fault, the system can be restored to its pre-cutover state within the agreed timeframe.

For the formal cutover, a department-priority phased rollout strategy is recommended: begin with departments that carry lower operational volume, such as health check centres, accumulate stable performance data, and then progressively extend to higher-risk departments such as the emergency department and ICU. This approach distributes integration risk across the timeline and prevents a single point of failure from affecting the entire hospital.

When planning a phased go-live strategy, the quality of upfront requirements scoping almost always determines how smoothly execution proceeds. GTS's previously published article, “Hospital Information Management System Custom Development Process: From Requirements Gathering to Go-Live”, provides a detailed breakdown of resource allocation across each phase from initial planning to formal delivery, and is recommended reading before initiating an integration project.

Step 6: Post-Launch Integration Stability Monitoring

For most integration projects, documentation stops on go-live day. In reality, the first 90 days after launch represent the most vulnerable period for integration stability.

Ongoing monitoring should cover the following key indicators: interface message queue backlog, cross-system data synchronisation latency, eHealth+ upload success rate, and clinical staff system error rate.
A tiered alerting mechanism should also be established to distinguish between critical faults requiring immediate human intervention and lower-priority anomalies that can be addressed during scheduled maintenance windows.

In addition, a quarterly integration audit is recommended to proactively review the completeness and field accuracy of eHealth+ data submissions, identifying and resolving potential gaps before any regulatory compliance review.

The Key to Successful Integration Is Getting It Right From the Start

Hospital Information System integration is fundamentally a precisely planned systems engineering undertaking. The quality of preparation at each step directly determines the difficulty of the next. For Hong Kong private hospitals operating with limited resources, choosing a technology partner with local healthcare compliance expertise and deep familiarity with eHealth+ interface architecture delivers far greater long-term value than minimising upfront advisory costs.

GTS specialises in providing Health Information Management System custom development services for healthcare institutions in Hong Kong and the Greater Bay Area, and has delivered end-to-end integration projects for leading Hong Kong private hospitals encompassing LIS, RIS, pharmacy, electronic health records, and eHealth+ interfaces. If you are currently evaluating the feasibility of system integration at your institution, we welcome you to submit your current system landscape and integration objectives via the link below. Our technical consultants will arrange an initial assessment meeting within two business days: [Submit Your System Integration Assessment Request Now].

This article, "Hospital Information System Integration Checklist: 6 Steps for Hong Kong Private Hospitals to Connect LIS, RIS, Pharmacy and EHR", was compiled and published by GTS Enterprise Systems and Software Development Service Provider.
For reprint permission, please indicate the source and link: https://www.globaltechlimited.com/news/post-id-49/
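
The data reconciliation report recommended in Step 3 of the article above can be sketched in a few lines of Python. This is an illustrative outline only: the `reconcile` function, the `patient_id` key, and the sample rows are assumptions made for the example, and a production report would compare field values and checksums as well as record counts.

```python
from collections import Counter


def reconcile(source_rows, target_rows, key="patient_id"):
    """Compare source and target record sets by a shared key.

    Returns counts plus the keys that are missing, unexpected,
    or duplicated in the target system.
    """
    source_keys = [row[key] for row in source_rows]
    target_keys = [row[key] for row in target_rows]
    source_set, target_set = set(source_keys), set(target_keys)
    duplicates = sorted(k for k, n in Counter(target_keys).items() if n > 1)
    return {
        "source_count": len(source_keys),
        "target_count": len(target_keys),
        "missing_in_target": sorted(source_set - target_set),
        "unexpected_in_target": sorted(target_set - source_set),
        "duplicate_keys_in_target": duplicates,
    }


# Hypothetical sample data standing in for legacy and new system exports.
legacy = [{"patient_id": "P001"}, {"patient_id": "P002"}, {"patient_id": "P003"}]
migrated = [
    {"patient_id": "P001"},
    {"patient_id": "P003"},
    {"patient_id": "P003"},
    {"patient_id": "P004"},
]
report = reconcile(legacy, migrated)
for name, value in report.items():
    print(f"{name}: {value}")
```

Comparing keys as well as counts matters because matching totals can hide one missing record offset by one duplicate.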

How to Choose a Custom AI Development Company in Hong Kong: 8 Questions Every Enterprise Must Ask Before Signing a Contract

Choosing the right partner for custom AI development or enterprise AI application development in Hong Kong is one of the highest-risk procurement decisions a business executive will face in 2026. Choosing the wrong vendor means delayed delivery, uncontrolled costs, source code held hostage, and AI systems that cannot integrate with your existing business infrastructure.

This guide gives Hong Kong enterprise decision-makers 8 core questions covering technical capability, regulatory credentials, delivery guarantees, and contract terms, to help you accurately assess the true capability of every AI development vendor before committing any budget.

1. Why Most Hong Kong Enterprises Choose the Wrong AI Development Vendor

In Hong Kong, custom AI application development project failures are far from rare. Decision-makers typically identify three common failure patterns after the fact.

The three highest-cost mistakes in enterprise AI vendor selection:

First, the source code trap. Many vendors deliver a functioning AI application but retain ownership of the underlying code, leaving clients permanently dependent on the original developer for updates, fixes, and feature iterations, at prices set unilaterally by the vendor.

Second, the compliance blind spot. The vast majority of AI development companies operating in global markets have no substantive knowledge of Hong Kong's regulatory environment: they are unfamiliar with SFC requirements for AI-assisted advisory systems, HKMA model risk management guidelines, PDPO personal data protection obligations, or eHealth integration standards. Vendors who do not understand these frameworks will deliver AI systems that cannot pass compliance review.

Third, the timeline illusion. Delivery plans that lack structured milestones are the leading cause of AI project failure. Without contractually defined checkpoints, "three months to completion" routinely becomes six months, then twelve.

2. The 8 Questions You Must Ask Any AI Development Vendor Before Signing

Q1: After project delivery, who owns the source code, technical documentation, and model weights?
This is a non-negotiable baseline. The contract must explicitly state that all source code, technical documentation, and any fine-tuned model weights are transferred 100% to your organisation upon final delivery. Any vendor who hedges on this question, or proposes a licensing model, is building a dependency trap. A genuinely trustworthy custom AI development partner has no reason to retain your code.

Q2: Can the AI system be deployed entirely on our private infrastructure, with no data leaving our network?
For any regulated enterprise in Hong Kong, including financial institutions under SFC or HKMA oversight, healthcare providers handling patient data, and all organisations subject to PDPO, this question determines which vendors are non-starters. Private on-premise deployment of large language models is technically feasible for open-weight models such as DeepSeek-V3; proprietary models such as GPT-5 are offered as hosted services, so the vendor should explain how that fits your data-residency constraints. Only a small number of AI application development service providers have the infrastructure experience to execute this correctly. Require a clear technical proposal, not a sales assurance.

Q3: What is the guarantee mechanism for the MVP delivery timeline? How are milestone checkpoints defined in the contract?
For a well-scoped AI application development project, completing a functional MVP within thirty days is an achievable target. Require the vendor to map out every milestone: requirements confirmation, prototype delivery, integration testing, user acceptance testing, and production deployment. If a vendor cannot commit to a milestone structure in writing, treat this as a warning signal.
Vague timelines protect the vendor, not the client.Q4: Which AI models do you integrate, and what is the selection rationale for our specific use case?A technically credible custom AI development company should be able to clearly articulate — across dimensions of cost, latency, data residency requirements, and multilingual capability — when to use GPT-5, when to use DeepSeek-V3, and when to use open-source models, and why. If a vendor recommends the same model for every scenario without analysis, they are optimising their own workflow, not your business outcome. Enterprise-grade AI application development requires a multi-model strategy, not a one-size-fits-all deployment.Q5: Do you have practical experience delivering AI projects under Hong Kong SFC, HKMA, or PDPO compliance frameworks?This question immediately separates local expertise from global generalisation. Any vendor lacking direct delivery experience within Hong Kong's regulatory frameworks — including SFC requirements for AI-assisted advisory systems, HKMA model risk management guidelines, and PDPO data handling obligations — will add compliance risk to your project rather than reduce it. Require specific case examples, not general statements about "regulatory awareness."Q6: Can your AI Agent development capability integrate with our existing ERP, CRM, or legacy systems?Modern enterprise AI is not built in isolation. Whether deploying an AI Agent automation solution, a document processing system, or a predictive analytics platform, the system must connect to your existing SAP, Oracle, or legacy core business systems through clean API architecture. Require the vendor to describe — technically, not conceptually — how they achieved this type of integration in a previous engagement. A vendor without legacy system integration case studies is asking you to be their first experiment.Q7: What is the post-delivery support SLA? 
How are system failures, model performance degradation, and update iterations handled?AI systems in production degrade. Models drift. When upstream systems update, integration interfaces break. A responsible custom AI development partner will define a post-delivery support SLA in the contract, covering response times, fault resolution windows, model performance monitoring mechanisms, and the process for requesting enhancements. If a vendor treats post-delivery support as a secondary consideration during contract negotiation, they will treat it the same way in production.Q8: What triggers cost overruns in your pricing model? How are scope changes managed in the contract?Cost overruns in AI application development almost always originate from three sources: poorly defined requirements scope, uncontrolled model API usage costs, and data pipeline complexity underestimated at project initiation. A transparent vendor will walk through each of these risk items in advance, explain their change request process, and provide a contract structure that protects you from open-ended cost escalation. If a vendor cannot clearly explain what causes projects to go over budget, they have never seriously considered your risk exposure.3. 
GTS vs Typical AI Development Vendors: A Transparent ComparisonThe following uses GTS as a reference point, addressing each of the 8 questions above item by item, to serve as a benchmark when evaluating other vendors.1.Source code ownership: 100% transferred to the client upon final delivery, with no licensing dependencies retained.2.Private deployment: Full on-premise deployment capability, supporting GPT-5, DeepSeek-V3, Stable Diffusion, and proprietary multi-agent workflow engines on client-controlled infrastructure.3.Delivery timeline: Well-scoped AI application development projects delivered to MVP within 30 days, with milestone checkpoints set from day one of the contract.4.Regulatory credentials: Direct delivery experience under Hong Kong SFC, HKMA, PDPO, and eHealth integration frameworks.5.Legacy system integration: Demonstrated API integration delivery across SAP, Oracle, HMS, and custom legacy systems, spanning financial services, healthcare, and industrial IoT.6.Multi-model capability: Proprietary AI Agent development engine and workflow engine integrating GPT-5, DeepSeek-V3, Stable Diffusion, and open-source models — selected by use case, not by vendor preference.4. How Non-Technical Executives Can Evaluate an AI Vendor's Technical CapabilityYou do not need to understand Transformer architecture to evaluate an AI development company's capability. What you need is to request evidence, not explanations.Ask for anonymised case studies from comparable enterprise clients in Hong Kong or the Asia-Pacific region. Request a direct conversation with a reference client before signing. Review the technical architecture document proposed by the vendor before the contract is signed — any serious vendor will produce this during the scoping phase. If a vendor refuses to provide concrete evidence of prior delivery, that refusal is itself the answer.5. Frequently Asked QuestionsQ: Is it better to choose a local Hong Kong AI development company or an international firm? 
For most Hong Kong enterprises, a local vendor with verifiable regulatory experience holds a structural advantage in compliance-sensitive projects. International firms offer scale, but rarely possess working knowledge of SFC, HKMA, or PDPO in practice. When data residency and local compliance are non-negotiable prerequisites, local Hong Kong AI development company expertise is not a preference — it is a hard requirement.Q: How do we protect enterprise data during the custom AI development process? Before any development work begins, require the vendor to sign a detailed data processing agreement. Specify that all development and testing in the initial phases is conducted in isolated environments using no production data. For the most sensitive use cases, insist on private deployment architecture from the very first line of code.Q: Can a Hong Kong AI development company serve Greater Bay Area clients? Yes — and this demand is increasingly common. GTS serves enterprise clients across Hong Kong and the Greater Bay Area, with systems and content supporting English, Traditional Chinese, and Simplified Chinese environments. AI Agent development and workflow automation deployments have spanned both jurisdictions.Q: What core clauses must I insist on in an AI application development contract? Four clauses are non-negotiable: complete source code and intellectual property transfer upon final delivery; clearly defined acceptance criteria that trigger final payment; a post-delivery support SLA with committed response times; and a change request management procedure that prevents open-ended scope creep from driving up costs.ConclusionGTS answers yes to all 8 questions above. 
We deliver enterprise AI application development and AI Agent development solutions within 30 days, guarantee full source code ownership for clients, support private deployment, and have deep delivery experience under Hong Kong's SFC, HKMA, PDPO, and eHealth frameworks.If you are currently evaluating AI development vendors, we welcome you to bring these 8 questions directly to your first conversation with GTS — we commit to providing written responses to each one. Contact GTS's AI advisory team to schedule a consultation.This article, "How to Choose a Custom AI Development Company in Hong Kong: 8 Questions Every Enterprise Must Ask Before Signing a Contract" was compiled and published by GTS Enterprise Systems and Software Development Service Provider. For reprint permission, please indicate the source and link: https://www.globaltechlimited.com/news/post-id-48/

Enterprise Quantitative Trading System Development Guide: Efficient Financial System Solutions

Amid the ongoing digitalization of Hong Kong's financial markets and accelerating global capital flows, Quantitative Trading System Development is no longer merely a technical upgrade. It has become a key strategic approach for firms to improve trading efficiency, strengthen risk management, and reduce long-term operational costs. For brokerages, asset management companies, and diversified fintech enterprises alike, establishing a high-performing and robust trading system has become crucial for maintaining competitiveness and managing market volatility.

This article analyzes the practical considerations for Hong Kong-based enterprises implementing Quantitative Trading System Development, covering market demand, system architecture, robot integration, regulatory compliance, and enterprise-level Financial System Solutions.

1. Why Hong Kong Enterprises Require Professional Quantitative Trading System Development

As an international financial hub, Hong Kong boasts large-scale markets and rapid capital mobility while being strictly regulated by the Securities and Futures Commission (SFC) and the Hong Kong Monetary Authority (HKMA). With rising cross-market trading demands, the proliferation of high-frequency trading strategies, and the growing complexity of multi-asset transactions, manual operations alone can no longer satisfy requirements for low-latency execution, real-time risk management, and precise strategy implementation.

Enterprises commonly face:

(1) Integration challenges across multiple markets and asset classes
(2) Insufficient system stability under high trading volumes
(3) Increased compliance reporting and audit traceability pressures

Professional Quantitative Trading System Development helps firms build scalable technical infrastructure, enabling real-time market data processing, intelligent order routing, and closed-loop risk management, significantly reducing operational errors and latency risks.

2. Core Architecture and Design Principles of Enterprise-Level Quantitative Trading Systems

The architecture of enterprise-grade trading systems must balance high performance, low latency, and scalability. Core components typically include:

- Market Data Engine
- High-Performance Matching Engine
- Order Management System (OMS)
- Clearing and Settlement Modules
- Account and Funds Management System
- Real-Time Risk Control Module

From a technical perspective, distributed microservices architecture and event-driven architecture are key to ensuring high availability and risk control that runs in step with trading activity. These architectures avoid single points of failure and support multi-market, multi-strategy operations. Strategy version management, sandbox testing, and audit logging are essential to system stability and compliance.

As emphasized in our previous article, “Fintech Trading System Development Explained: A Practical Guide for Enterprises”, enterprise-grade platforms must balance execution efficiency with risk control loops to remain competitive in Hong Kong and US equity and derivative markets.

3. Enterprise-Level Automated Forex Trading Robots Integration and Strategy Execution

For enterprises involved in forex or cross-border trading, Automated Forex Trading Robots serve as core strategy execution tools. However, a single robot cannot independently form a complete trading system. Its performance relies on integration with market data sources, liquidity providers, risk engines, matching systems, and backtesting and monitoring modules.

Enterprises can use strategy version management, sandbox testing, and event-driven design to ensure system upgrades do not affect live trading stability. Additionally, adaptive position adjustments and strategy optimization features help reduce risk and improve returns in volatile markets.

4. Regulatory Compliance, Risk Management, and Financial System Solutions Implementation

Given Hong Kong’s strict regulatory environment, enterprises conducting Quantitative Trading System Development must comprehensively address compliance and risk management. According to SFC's Guidelines for the Regulation of Automated Trading Services and HKMA regulatory policies, enterprises should establish:

- Comprehensive risk management and internal control mechanisms
- Pre- and post-trade monitoring procedures
- System testing and version management policies

At the same time, embedding Financial System Solutions, including KYC, AML, trade surveillance, audit tracking, full logging, and stress-testing procedures, ensures compliance and operational sustainability. These measures enable enterprises to maintain trading efficiency while mitigating regulatory risk and achieving long-term operational resilience.

(Note: The above regulatory documents are all publicly available information. Companies should conduct professional legal assessments based on their own license categories and business scope.)

Efficient and robust Quantitative Trading System Development has become a cornerstone for Hong Kong enterprises to maintain competitiveness in financial markets. From market analysis and system architecture design to Automated Forex Trading Robots integration, risk control, and compliance implementation, each step directly impacts trading efficiency and long-term returns. Partnering with experienced technical providers such as GTS, which offers end-to-end customization from market data processing and matching engines to clearing, settlement, CRM, and fund management, enables enterprises to build a sustainable and evolving trading platform while controlling costs.

If your enterprise wants to learn how to build a quantitative trading platform compliant with Hong Kong market regulations, GTS offers enterprise-level solutions and dedicated technical support.

This article, "Enterprise Quantitative Trading System Development Guide: Efficient Financial System Solutions" was compiled and published by GTS Enterprise Systems and Software Development Service Provider. For reprint permission, please indicate the source and link: https://www.globaltechlimited.com/news/post-id-47/
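
To make the pre-trade monitoring and real-time risk control described in the guide above more concrete, the following is a minimal Python sketch of a pre-trade check. It is an illustration only, not a description of any particular vendor's system: the `Order` and `RiskLimits` types, the field names, and the two limits checked (order notional and resulting net position) are all assumptions chosen for the example.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class Order:
    symbol: str
    side: str       # "buy" or "sell" (hypothetical convention)
    quantity: int
    price: float


@dataclass(frozen=True)
class RiskLimits:
    max_order_notional: float  # largest allowed value of a single order
    max_position: int          # largest allowed absolute net position


def pre_trade_check(order: Order, current_position: int,
                    limits: RiskLimits) -> List[str]:
    """Return a list of limit violations; an empty list means the order passes."""
    if order.side not in ("buy", "sell"):
        return ["unknown order side: " + order.side]

    violations: List[str] = []

    # Check 1: value of this single order.
    notional = order.quantity * order.price
    if notional > limits.max_order_notional:
        violations.append("order notional exceeds limit")

    # Check 2: net position that would result if the order were fully filled.
    signed_qty = order.quantity if order.side == "buy" else -order.quantity
    if abs(current_position + signed_qty) > limits.max_position:
        violations.append("resulting position exceeds limit")

    return violations


limits = RiskLimits(max_order_notional=10_000.0, max_position=500)
order = Order(symbol="EXAMPLE", side="buy", quantity=100, price=50.0)
result = pre_trade_check(order, current_position=450, limits=limits)
```

In an event-driven design, a check of this kind would typically sit between the strategy and the order management system, so that every order event is evaluated before it reaches the market and every rejection is written to the audit log.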

Industrial IoT System Setup: Architecture Design, Implementation Process and Scalable Industrial IoT Solutions

In the digital transformation of manufacturing and large industrial enterprises, Industrial IoT system setup is gradually becoming a critical foundation for building smart manufacturing and data-driven operations. By integrating equipment, sensors, and enterprise management systems, companies can monitor equipment status, production efficiency, and operational data in real time, while improving overall decision-making capabilities through data analytics.

However, many organizations face a common challenge when planning an IIoT platform: how to ensure system stability and security while building an architecture that supports long-term scalability. This article explores the key considerations enterprises should focus on when implementing Industrial IoT systems, covering architecture design, implementation processes, and vendor selection.

1. What Is Industrial IoT System Setup?

Industrial IoT system setup refers to integrating industrial equipment with enterprise information systems through device connectivity, data platforms, and intelligent analytics technologies. The goal is to build a technical architecture capable of continuously collecting, processing, and analyzing equipment data.

Compared with general IoT applications, IIoT systems emphasize the following characteristics:

- Industrial-grade system stability
- Highly secure device communication
- Integration with enterprise IT systems
- Long-term scalability and multi-device management

In practical applications, enterprises often connect PLC, SCADA, MES, or ERP systems to an IoT platform so that equipment data can be transformed into actionable operational insights. This approach not only improves equipment visibility but also supports high-value applications such as predictive maintenance, smart manufacturing, and energy management.

2. Core Components of an Industrial IoT System Architecture

Most enterprise-level systems can be divided into four primary layers:

1. Device and Sensor Layer

This layer collects operational data from industrial equipment, including sensors, PLC controllers, and various industrial devices. Common communication protocols include Modbus, OPC UA, and MQTT.

2. Edge Computing Layer

Edge devices process and filter data locally within the factory environment, reducing the burden of cloud transmission while improving system responsiveness. This is particularly important for industrial scenarios requiring real-time monitoring and control.

3. Data Platform and Integration Layer

This layer forms the core of enterprise Industrial IoT solutions, primarily responsible for device management, data storage and analytics, API integration with enterprise systems, and IoT platform service management.

4. Application and Decision Layer

Through data dashboards, AI analytics, and operational visualization tools, managers can monitor equipment conditions, production performance, and key operational indicators in real time, improving enterprise decision-making efficiency.

3. Implementation Process of Industrial IoT System Setup

When deploying IIoT systems, enterprises are generally advised to adopt a phased approach rather than implementing the entire platform at once. The process typically includes the following stages:

1. Requirement Analysis and Application Scenario Definition

- Predictive maintenance for equipment
- Production efficiency optimization
- Energy monitoring and management
- Production process data integration

2. System Architecture Planning and Technology Selection

- Edge device and network architecture
- Industrial communication protocols
- IoT platform technologies
- Data storage and analytics approaches

3. Platform Development and System Integration

During this phase, equipment data is connected to the platform and integrated with ERP, MES, or data warehouse systems so that operational data can directly support enterprise management and decision-making.

4. Pilot Deployment and Gradual Expansion

It is recommended to first deploy a pilot system on a single production line or equipment group before gradually expanding to the entire factory or enterprise.

4. Common Challenges in Industrial IoT Deployment

In real-world projects, enterprises often encounter various technical and management challenges when implementing IIoT systems, such as:

- Inconsistent communication protocols across devices
- Legacy equipment lacking data interfaces
- Integration difficulties between IT and OT systems
- Industrial network security concerns
- Insufficient platform scalability

If these issues are not properly addressed during the early planning stage, they may affect the overall success of the system deployment.

For a deeper understanding of common integration challenges in IIoT projects, you may refer to our previous analysis article: “Industrial IoT Integration Development: What Challenges Do Enterprises Most Commonly Face?” which outlines typical issues in device integration and platform architecture planning.

5. How to Choose the Right Industrial IoT Solution Provider

For most enterprises, an IIoT platform is a long-term investment, making the selection of the right technology partner critically important. When evaluating potential vendors, companies should focus on the following capabilities:

- Experience in large-scale device integration
- Ability to provide customized IoT platform architectures
- Enterprise-level PaaS capabilities
- Familiarity with manufacturing operations

For example, technology teams such as GTS that specialize in enterprise-level IIoT system development typically design complete IoT platforms based on an organization’s overall data architecture. Through customized system development, they help enterprises build a digital foundation that enables smart equipment management, data-driven operations, and visualized decision-making.

If your organization is planning an IIoT platform or evaluating its Industrial IoT system setup strategy, consider discussing real application scenarios with technical experts. GTS can assist in planning a complete IIoT platform architecture and customized system development solutions, enabling enterprises to gradually achieve industrial digital transformation through scalable deployment.

6. Frequently Asked Questions (FAQ)

What core technologies are required for Industrial IoT system setup?

These typically include device communication protocols, edge computing, IoT platform architecture, cloud-based data analytics, and enterprise system integration technologies.

Do IIoT systems require replacing existing equipment?

Not necessarily. Many enterprises can connect legacy equipment to an IoT platform using sensors and edge devices, gradually enabling equipment data integration.

Does an Industrial IoT platform require customized development?

For medium-to-large enterprises, customized platforms usually better match existing equipment environments and enterprise system architectures. As a result, many IIoT projects adopt customized development approaches.

As manufacturing and industrial enterprises continue advancing toward smart manufacturing and digital transformation, Industrial IoT system setup has become a key foundation for improving operational efficiency and data-driven decision-making. With well-designed architectures, clear implementation processes, and the right technology partners, enterprises can establish stable and scalable IIoT platforms and gradually move toward truly data-driven intelligent operations.

This article, "Industrial IoT System Setup: Architecture Design, Implementation Process and Scalable Industrial IoT Solutions" was compiled and published by GTS Enterprise Systems and Software Development Service Provider. For reprint permission, please indicate the source and link: https://www.globaltechlimited.com/news/post-id-46/
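
As an illustration of the edge computing layer described in the architecture section above, where data is filtered locally to reduce cloud transmission, the following Python sketch applies a simple deadband filter: a reading is forwarded only when it differs from the last forwarded value by more than a threshold. The `Reading` type, the field names, and the threshold value are assumptions made for this example; a real deployment would receive readings over a protocol such as Modbus, OPC UA, or MQTT and choose thresholds per metric.

```python
from dataclasses import dataclass
from typing import Dict, Iterable, List, Tuple


@dataclass(frozen=True)
class Reading:
    device_id: str    # hypothetical device identifier
    metric: str       # e.g. "temperature_c"
    value: float
    timestamp: float  # seconds since epoch


class DeadbandFilter:
    """Forward a reading only if it moved more than `threshold` away from
    the last value forwarded for the same device and metric."""

    def __init__(self, threshold: float) -> None:
        self.threshold = threshold
        self._last_sent: Dict[Tuple[str, str], float] = {}

    def should_forward(self, reading: Reading) -> bool:
        key = (reading.device_id, reading.metric)
        last = self._last_sent.get(key)
        if last is None or abs(reading.value - last) > self.threshold:
            self._last_sent[key] = reading.value
            return True
        return False


def filter_readings(readings: Iterable[Reading], threshold: float) -> List[Reading]:
    """Return only the readings an edge device would send upstream."""
    deadband = DeadbandFilter(threshold)
    return [r for r in readings if deadband.should_forward(r)]


raw = [
    Reading("press-01", "temperature_c", value, float(i))
    for i, value in enumerate([20.0, 20.1, 20.2, 21.0, 21.1])
]
forwarded = filter_readings(raw, threshold=0.5)
forwarded_values = [r.value for r in forwarded]
```

With the sample data, five raw readings are reduced to two forwarded ones. The trade-off is that small fluctuations inside the deadband never reach the data platform, so the threshold should be set with the downstream analytics in mind.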

HMS Hospital Management System Implementation Guide: Architecture Design, System Integration, and AI-Driven Clinical Management Upgrade

With the continuous increase in healthcare service demand in Hong Kong, along with the growing specialization of medical disciplines and the widespread operation of multi-hospital networks, healthcare institutions are facing unprecedented pressure in information management. When outpatient, inpatient, laboratory, imaging, and pharmacy systems operate in isolation for long periods, it not only creates data silos but also directly affects clinical efficiency and management decision-making.

For hospital leadership, building a scalable HMS Hospital Management System Implementation architecture is no longer simply an IT upgrade, but a fundamental step toward driving comprehensive digital healthcare transformation.

1. Why HMS Hospital Management System Implementation Is Becoming the Core of Healthcare Digital Transformation

Many healthcare institutions initially approach digitalization by implementing single application systems, such as outpatient appointment systems or Electronic Medical Records (EMR). However, as institutions expand and service workflows become increasingly complex, fragmented IT systems often lead to inconsistent data and declining operational efficiency.

As a result, more healthcare organizations are re-evaluating their overall healthcare IT architecture and integrating different modules into a unified platform through HMS Hospital Management System Implementation. Through centralized data management and coordinated workflows, healthcare institutions can more effectively:

- Improve the efficiency of clinical information flow
- Establish comprehensive patient lifecycle data management
- Strengthen healthcare operational data analytics capabilities
- Support cross-hospital and cross-department collaboration

From a long-term perspective, HMS is not only a management tool but also a critical infrastructure for healthcare data governance and decision analytics.

2. Core Architecture of Modern HMS Platforms

From a technical perspective, modern healthcare information platforms are gradually shifting from monolithic systems toward modular and microservices architectures. This approach allows healthcare institutions to continuously expand or upgrade functional modules without interrupting core systems.

A comprehensive HMS platform typically includes the following core systems:

- Electronic Medical Records (EMR)
- Laboratory Information System (LIS)
- Picture Archiving and Communication System (PACS)
- Pharmacy and inventory management
- Patient relationship management (CRM)

These modules are interconnected through a unified data model and the Master Patient Index (MPI), ensuring data consistency across different systems.

If healthcare institutions are evaluating system development strategies, factors such as cost structure and integration planning are just as important as technical architecture. In another article, “Hospital Management System Development Guide: A Comprehensive Analysis of Costs, Integration, and Strategy for Hong Kong Healthcare Institutions” we provide an in-depth analysis of common budgeting and deployment considerations during healthcare system implementation, which can serve as an important reference during the early planning phase.

3. Clinical Healthcare Management System Development: From Clinical Workflows to Intelligent Automation

In healthcare environments, the real value of information systems ultimately lies in supporting clinical workflows. In recent years, many organizations planning Clinical Healthcare Management System Development have shifted their focus toward the actual working processes of healthcare professionals rather than simply adding more system modules.

Through workflow-oriented system design, HMS platforms can support various clinical optimizations, including:

- Automated physician consultation workflows
- AI voice medical record input
- SOAP-structured clinical documentation generation
- Clinical Decision Support Systems (CDSS)

These technologies can significantly reduce the time physicians spend on administrative documentation, allowing them to focus more on clinical care while simultaneously helping healthcare institutions accumulate high-quality clinical data.

4. System Integration Capabilities: How FHIR and HL7 Enable Healthcare Interoperability

In healthcare IT environments where multiple systems coexist, interoperability is a key factor influencing the overall value of the system. Without unified data standards, significant manual processing and data transformation are often required between systems.

To address this issue, the global healthcare IT industry widely adopts standards such as FHIR R4 and HL7 as the foundation for healthcare data exchange. Through these standards, HMS platforms can enable:

- Cross-system medical record integration
- Automatic synchronization of laboratory and imaging data
- Third-party medical device integration
- Healthcare data sharing and analytics

For healthcare institutions in Hong Kong, a highly interoperable system architecture can also lay the groundwork for future healthcare information connectivity.

5. How Smart Healthcare Solutions Are Reshaping Healthcare Operational Efficiency

As artificial intelligence technologies continue to mature, healthcare information platforms are entering an intelligent phase. By integrating AI analytics with operational data, Smart Healthcare Solutions help healthcare institutions improve efficiency across multiple dimensions, such as:

- Intelligent outpatient scheduling
- Patient flow forecasting
- Clinical risk prediction
- Healthcare resource allocation analysis

These capabilities allow management teams to gain faster insight into operational performance and make strategic decisions based on data rather than relying solely on experience.

In real-world implementation, healthcare institutions often require experienced technology partners to assist in system architecture design and long-term platform upgrades. For example, GTS provides enterprise-grade HMS platform customization services for healthcare institutions in Hong Kong, combining microservices architecture, FHIR/HL7 interoperability standards, and AI voice medical record technologies to support the complete medical workflow from outpatient services to inpatient care.

If your organization is planning the next phase of HMS Hospital Management System Implementation, it is recommended to begin with a comprehensive evaluation of existing IT infrastructure and clinical workflows. Through systematic planning and professional technical support, healthcare institutions can enhance clinical efficiency while building a digital healthcare platform with long-term scalability.

This article, "HMS Hospital Management System Implementation Guide: Architecture Design, System Integration, and AI-Driven Clinical Management Upgrade" was compiled and published by GTS Enterprise Systems and Software Development Service Provider. For reprint permission, please indicate the source and link: https://www.globaltechlimited.com/news/post-id-45/
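
To illustrate how the FHIR R4 standard mentioned in the interoperability section above can carry a patient identity from the Master Patient Index between systems, the following Python sketch maps an internal patient record to a FHIR `Patient` resource as plain JSON. The resource fields used (`resourceType`, `identifier`, `name`, `gender`, `birthDate`) are part of the FHIR R4 Patient resource; the internal record keys, the `to_fhir_patient` helper, and the identifier system URI are hypothetical and exist only for this example.

```python
import json
from typing import Any, Dict

# Administrative gender codes permitted by FHIR R4.
FHIR_GENDERS = {"male", "female", "other", "unknown"}


def to_fhir_patient(record: Dict[str, str], mpi_system: str) -> Dict[str, Any]:
    """Map a hypothetical internal patient record to a FHIR R4 Patient dict.

    `record` is assumed to hold the keys mpi_id, family_name, given_name,
    gender, and birth_date (YYYY-MM-DD); `mpi_system` is the URI that
    names the Master Patient Index issuing the identifier.
    """
    gender = record["gender"].lower()
    if gender not in FHIR_GENDERS:
        gender = "unknown"  # fall back rather than emit an invalid code

    return {
        "resourceType": "Patient",
        "identifier": [{"system": mpi_system, "value": record["mpi_id"]}],
        "name": [{"family": record["family_name"],
                  "given": [record["given_name"]]}],
        "gender": gender,
        "birthDate": record["birth_date"],
    }


internal_record = {
    "mpi_id": "MPI-000123",
    "family_name": "Chan",
    "given_name": "Tai Man",
    "gender": "Male",
    "birth_date": "1980-05-17",
}
patient = to_fhir_patient(internal_record, "https://hospital.example/mpi")
patient_json = json.dumps(patient, indent=2)
```

Because every module exchanges the same resource shape keyed by the MPI identifier, EMR, LIS, and PACS components can refer to one patient without custom field mapping between each pair of systems. A production integration would also validate the output against the FHIR specification, for example with a conformance-checking library or server.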

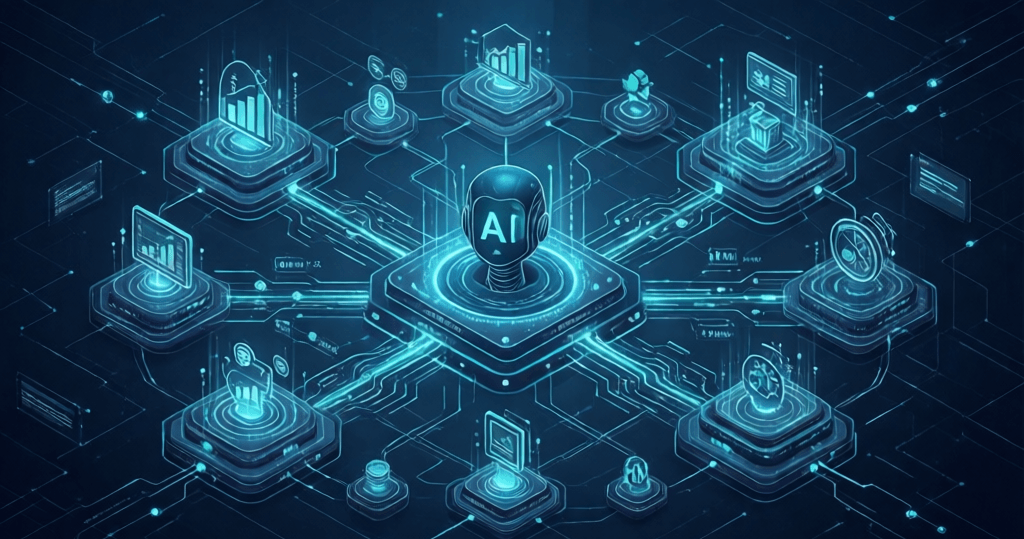

How Enterprise AI Applications and Solutions Drive Business Transformation

As the digital economy develops rapidly, artificial intelligence has evolved from a technical concept into a fundamental capability for enterprise operations. More and more organizations are exploring how Enterprise AI Development can help them build sustainable AI application ecosystems, thereby improving operational efficiency, optimizing decision-making models, and establishing long-term advantages in increasingly competitive markets.

From intelligent customer service and automated content generation to enterprise knowledge management and operational analytics, AI technologies are penetrating multiple core areas of modern organizations. However, the real value of AI for enterprises does not lie in a single tool, but in a continuously evolving enterprise-grade AI solution supported by a complete AI technology architecture.

1. Where Should Enterprises Begin with AI? Understanding the Business Value of Generative AI

When exploring AI, many organizations start with a single application scenario such as content generation, customer service responses, or document summarization. These applications can quickly demonstrate the potential of generative AI, but for business leaders, it is more important to understand the role AI plays across the entire operational workflow.

In practice, generative AI can help organizations process knowledge more efficiently. For example, enterprises can build intelligent knowledge bases, optimize document search capabilities, and accelerate report generation. With well-designed system integration and workflows, AI can not only reduce repetitive tasks but also allow organizations to focus their resources on strategic decision-making and business innovation.

Therefore, the first step in adopting AI is not selecting a model, but clarifying business objectives and identifying the specific problems AI should solve within the organization.

2. How Enterprises Turn AI Applications into Real Business Capabilities

After completing initial experimentation, the next key challenge for enterprises is integrating AI applications into daily operational workflows. This is where Enterprise AI Application Development demonstrates its core value.

Unlike simply using AI tools, enterprise-level deployment typically requires the integration of multiple systems, including CRM, ERP, internal document systems, and data analytics platforms. Through APIs, RAG (Retrieval-Augmented Generation) architecture, and AI Agent technologies, organizations can build automated workflows, such as:

- Automatic organization and classification of internal documents
- Real-time responses to customer inquiries
- Assistance with operational data analysis
- Decision-making insights for management

When AI becomes deeply integrated with existing enterprise systems, its role evolves from a standalone tool into a core part of business capabilities.

3. Why Enterprises Need Customized AI Architectures Instead of Generic Tools

With the rapid advancement of AI technologies, the market now offers a wide range of SaaS tools and general-purpose AI platforms. However, these tools often struggle to fit an organization’s internal workflows and meet its data requirements.

As enterprises place increasing emphasis on data security, business process integration, and long-term technological capabilities, more organizations are shifting from general AI tools to customized system development. Standardized tools may be quick to deploy, but they often fail to align with complex internal workflows and data structures. We previously explored this issue in detail in the article “Why Enterprises Need Custom AI Solutions Instead of Off-the-Shelf Tools”, where we analyzed common misconceptions and considerations in enterprise AI investment decisions.

Through customized enterprise AI architectures, organizations can build proprietary model knowledge bases, workflows, and security policies, ensuring that AI systems not only meet business needs but also comply with regulatory and data governance requirements.

4. Enterprise AI Platformization: From Workflows to Large Model Architecture

As AI application scenarios continue to grow, enterprises typically need to build a unified AI platform to manage models, data, and applications. This platform-based approach can improve system efficiency while reducing future expansion costs.

A typical enterprise AI platform usually includes several core components:

- Large model management and multi-model collaboration mechanisms
- Enterprise knowledge bases and RAG search architecture
- AI Agents and automated workflows
- Access control and data security mechanisms

During the platformization process, choosing the right technology partner is equally critical. Organizations need to evaluate whether vendors possess comprehensive system integration capabilities, AI architecture design experience, and long-term technical support capacity, to ensure that their Enterprise AI Solutions remain stable and continue to be optimized.

If organizations lack sufficient internal technical resources, collaborating with a professional AI system development team can often accelerate successful implementation.

5. Long-Term Strategy for AI Transformation: Enterprise AI Investment and Market Trends

From a global technology perspective, enterprise AI investment is gradually shifting from isolated applications to holistic platform development. In the future, the key factor in enterprise competitiveness will not simply be whether AI is used, but whether organizations possess the technological architecture to continuously develop AI capabilities.

In this process, Enterprise AI Development will become essential infrastructure for enterprise digital transformation. Through well-planned architecture design and long-term technology strategies, organizations can gradually build their own AI capabilities and continue creating value across different business scenarios.

As a technology service provider specializing in enterprise system development, GTS has long supported large organizations and institutions with customized AI system development and architecture design services. We have extensive experience in enterprise AI application development, model knowledge base construction, AI Agent workflow design, and private deployment. Our team helps enterprises build sustainable AI capabilities that can evolve alongside business growth.

Organizations are welcome to connect with our consulting team to discuss their application scenarios and business requirements. Based on industry characteristics and technical conditions, we provide practical AI architecture recommendations and implementation strategies.

This article, "How Enterprise AI Applications and Solutions Drive Business Transformation", was compiled and published by GTS Enterprise Systems and Software Development Service Provider. For reprint permission, please indicate the source and link: https://www.globaltechlimited.com/news/post-id-44/


Recommended Reading